AI’s impact on everyday technology is undeniable, but debate over its rapid growth often turns on where and how it is applied. The U.S. Air Force, for instance, already uses AI to improve efficiency and simplify logistics. Still, caution is essential when incorporating AI into military operations. The AI Guardrails Act, proposed by Michigan Senator Elissa Slotkin in March 2026, aims to ensure that human oversight remains integral to military uses of AI.

In an official government release, Senator Slotkin said her proposed legislation centers on three objectives: “Ensuring a human is involved when lethal autonomous weapons are fired, outlawing AI’s use for spying on U.S. citizens, and maintaining human control over nuclear weapon launches.” These measures are designed not to stifle the U.S. AI sector but to secure its leadership while promoting safe and responsible development. “We must win the AI race against China,” stressed Senator Slotkin.

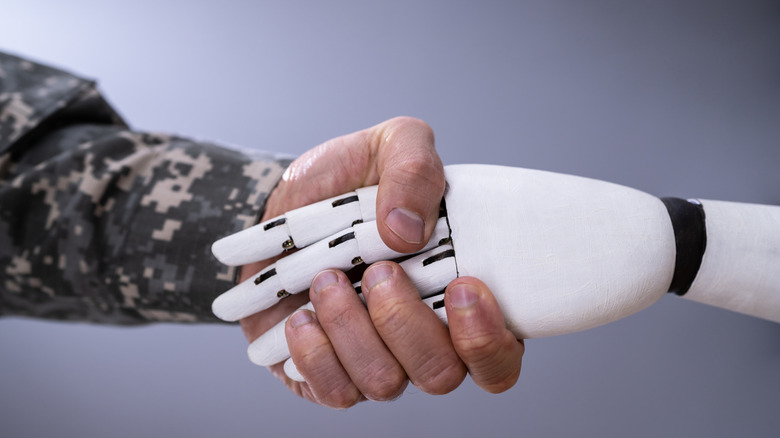

AI errors, glitches, and malfunctions can occur, and while human decisions also have their shortcomings, combining human judgment with AI capabilities offers a path forward. Here’s how this legislation could benefit the United States.

Further Insights into the AI Guardrails Act

In elaborating on the bill’s provisions, Senator Slotkin described its three limits as “common sense” measures: barring AI from operating lethal autonomous weapons without human oversight, forbidding AI from launching nuclear weapons, and prohibiting its use in mass surveillance. These principles echo existing policy, notably Department of Defense Directive 3000.09, which calls for appropriate levels of human judgment over the use of force. Automated systems such as the Navy’s Phalanx CIWS, for example, can detect and track targets on their own but operate under human supervision.

In essence, the legislation would make these three uses of AI unlawful. The reasoning behind this approach is articulated in the document itself: “Certain military decisions are too risky and significant for machines to determine.” The restrictions also help preserve clarity and accountability in military actions, which can blur as AI operates with greater autonomy.

This legislative effort marks a notable step forward in adhering to the five principles of ethical AI, which the Department of Defense incorporated into its AI development strategy in February 2020. These principles hold that AI should be equitable, governable, reliable, responsible, and traceable. At the time of writing, the bill is new to the legislative agenda, so its reception among fellow lawmakers remains uncertain. Nevertheless, it presents a crucial opportunity to guide the evolution of AI within one of its most challenging domains.